Demo time!

The low-fi approach

Relocalizing data collection

- Sometimes you don't need a server

- We are rarely doing BigData™

- Let's put the researcher at the center so they can control their data

A programmatic API

Jupyter's back y'all!

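```python
from minet import multithreaded_fetch

# Placeholder URLs for illustration: any iterable of URLs will do
urls_iterator = ["https://example.com", "https://example.org"]

for result in multithreaded_fetch(urls_iterator):
    print(result.status)
```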
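To show what a call like this does under the hood, here is a stdlib-only sketch of the same pattern: a thread pool fetches URLs concurrently and yields one result per URL as each request completes. This is not minet's implementation. `fetch_all`, `FetchResult` and `default_fetch` are hypothetical names chosen for this sketch, and the pluggable `fetch` argument is an assumption made so the logic can be exercised without network access.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed
from dataclasses import dataclass
from typing import Callable, Iterable, Iterator, Optional
from urllib.error import HTTPError
from urllib.request import urlopen


@dataclass
class FetchResult:
    url: str
    status: Optional[int]  # HTTP status code, None if the request failed
    error: Optional[Exception] = None


def default_fetch(url: str) -> int:
    """Perform a plain GET and return the HTTP status code."""
    try:
        with urlopen(url, timeout=10) as response:
            return response.status
    except HTTPError as e:
        # urlopen raises on 4xx/5xx responses; still report the status code
        return e.code


def fetch_all(
    urls: Iterable[str],
    fetch: Callable[[str], int] = default_fetch,
    threads: int = 10,
) -> Iterator[FetchResult]:
    """Fetch URLs concurrently, yielding results in completion order."""
    with ThreadPoolExecutor(max_workers=threads) as executor:
        # Map each pending future back to the URL it is fetching
        futures = {executor.submit(fetch, url): url for url in urls}
        for future in as_completed(futures):
            url = futures[future]
            try:
                status = future.result()
            except Exception as error:
                # Network errors are reported, not raised, so one bad URL
                # does not abort the whole collection
                yield FetchResult(url, None, error)
            else:
                yield FetchResult(url, status)
```

Usage mirrors the minet loop above: iterate over `fetch_all(urls)` and read `result.status`. This sketch submits every URL up front, which suits the modest volumes discussed here but would need a bounded queue for very large inputs.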

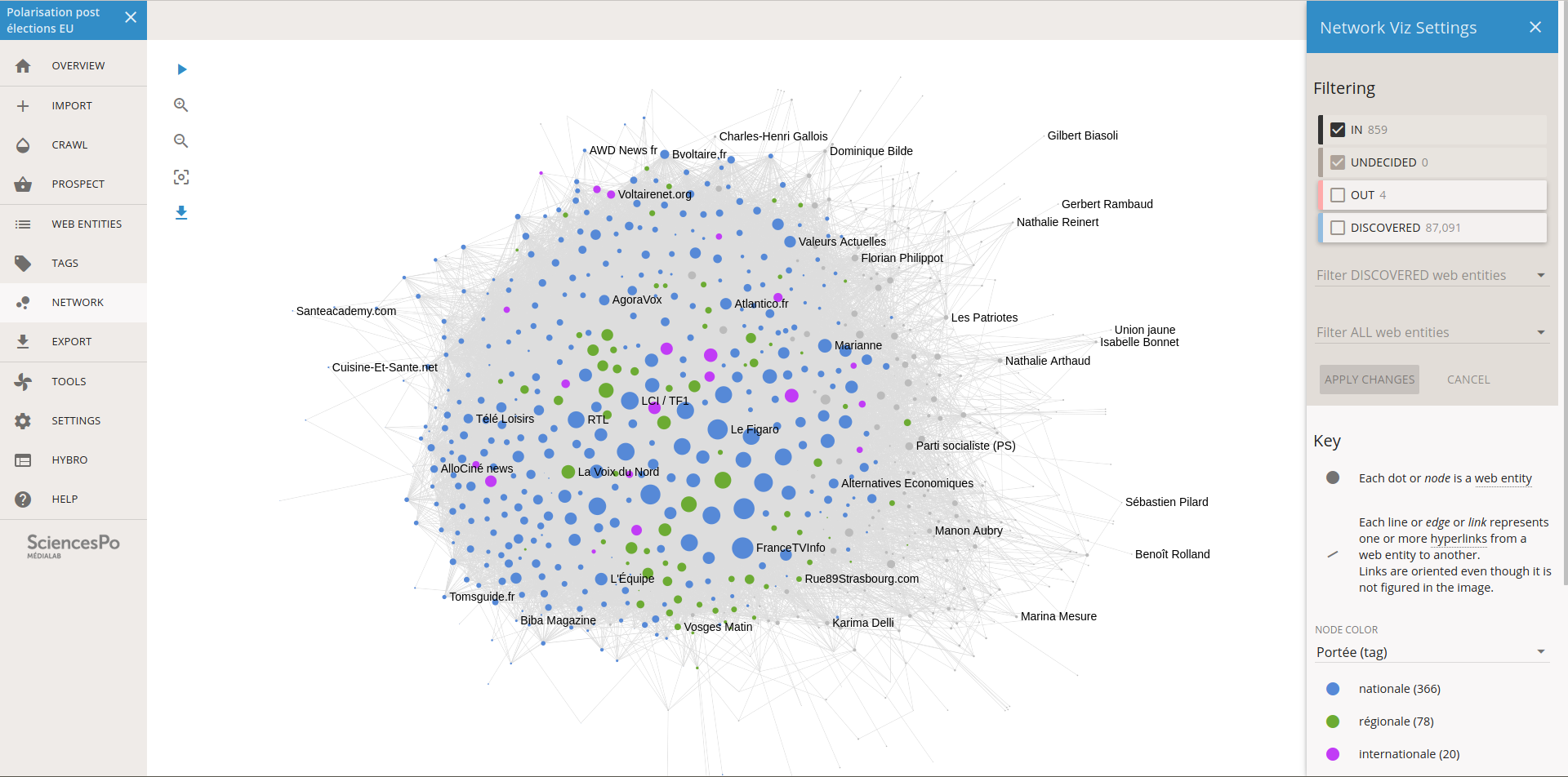

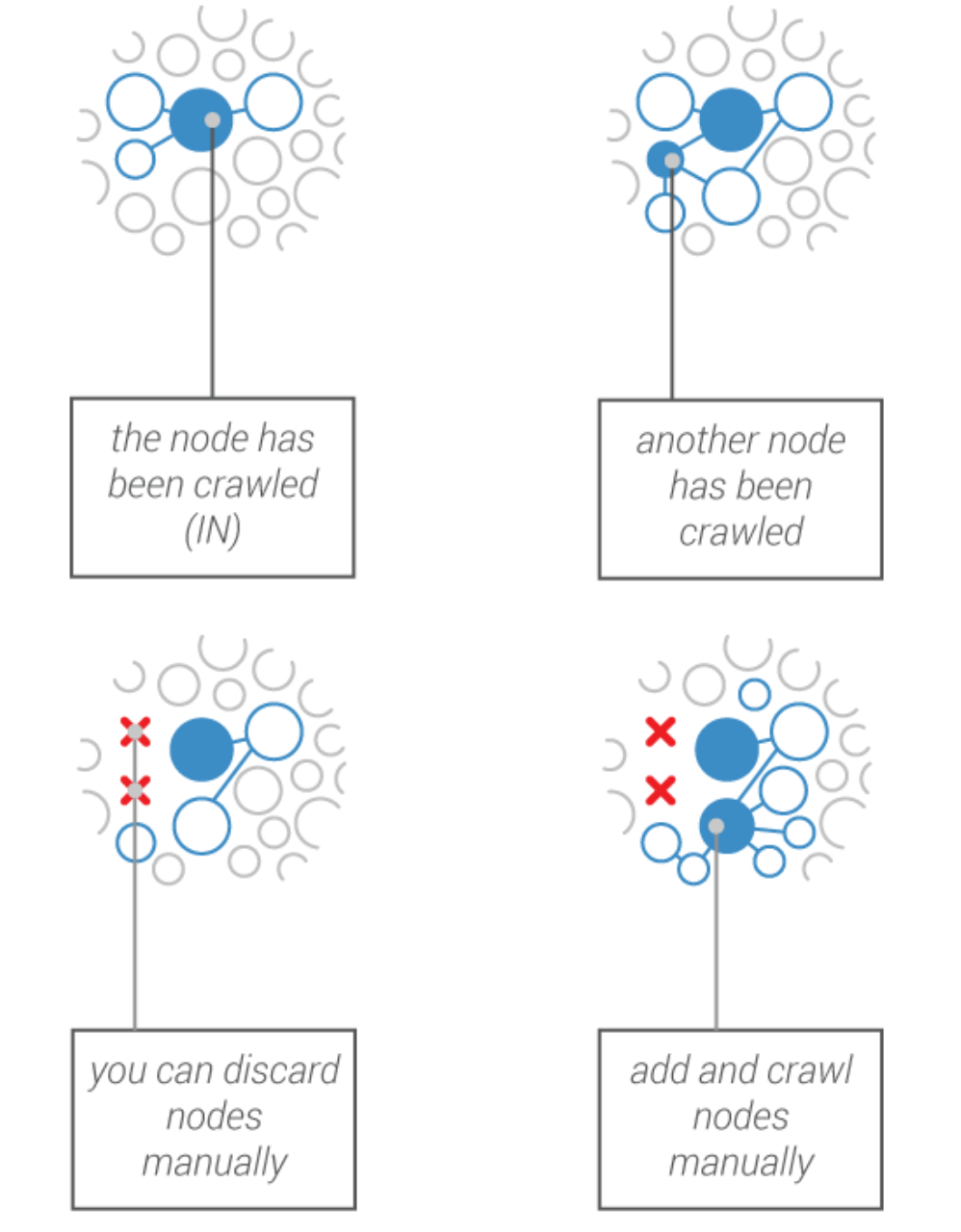

How can we enable researchers to crawl the Web?

- A dedicated interface

- Serving a robust methodology

- Non-trivial technical challenges

- Trade-off between scalability & usability

We need to be able to design user paths.

The future!

What about a GUI for minet?

Thank you for listening!